9.2 Symbolic Methods

This section under major construction.

Symbolic integration.

In introductory calculus, we learn various rules for differentiating and integrating functions. Differentiating is a mechanical process with a half dozen or so general purpose rules.- Sum and difference rule. (f(x) ± g(x))′ = f′(x) ± g′(x).

- Product rule. (f(x) g(x))′ = f(x) g′(x) + g(x)f′(x).

- Quotient rule. (f(x)/g(x))′ = (f′(x) g(x) - g′(x) f(x)) / g2(x).

- Power rule. (xk)′ = kxk-1.

- Chain rule. (f(g(x))′ = f(g(x)) g′(x).

There are also some rules for special functions that are typically derived once and then memorized, e.g., the derivative of sin(x) is cos(x); the derivative of exp(x) is exp(x); the derivative of ln(|x|) is 1/x; the derivative of sec(x) is sec(x) tan(x); the derivative of arcsin(x) is (1 - x2)-1/2.

On the other hand, we learn that indefinite integration is a much harder problem. Calculus students typically learn a set of ad hoc pattern matching rules for finding the antiderivative of a function of one variable. (Below, we assume constant term of antiderivative is zero.)

- Constant rule. The antiderivative of cf(x) is the product of c and the antiderivative of f(x).

- Sum rule. The antiderivative of f(x) + g(x) is the sum of the antiderivatives of f(x) and g(x).

- Polynomials. The antiderivative of f(x) = xb (for b &neq; 0 and b &neq; 1) is xb-1 / (b-1). By combining this rule with the two previous ones, we can determine the antiderivative of any polynomial.

- Table lookup. Memorize the antiderivatives of a variety of simple functions. Ex: sin(x), tan(x), arctan(x). Ex: Antiderivative of ax is ax / ln a.

- Substitution. Often useful when f(x) = g(h(x)). Ex: f(x) = sin(√x) or f(x) 1/(1 + x^2) then substitute x = tan t. It's not always obvious what you should substitue. Often when you spot a term like sqrt(x2 ± a2), then a trig substituion helps.

- Integration by parts. Antiderivative of f(x)g′(x) equals f(x)g(x) minus the antiderivative of g(x)f′(x). Need to get lucky to have function break up into required terms. Also need to recognize the right pattern. Ex: anitderivative of x ex or x2 sin x. Interesting example that stresses ad hocness: f(x) = ex cos x and f(x) = ln(x).

- Logarithm rule. If f(x) = g′(x) / g(x), then the antiderivative of f(x) is ln(|g(x)|). Ex: f(x) = tan(x) = sin(x) / cos(x) = -g'(x) / g(x), where g(x) = cos(x). Thus, the antiderivative of f(x) is ln|sec(x)|.

- Partial fraction decomposition. Ex: f(x) = (x4 - x3) / (x2 + 2)(x - 3). Reference. Need to divide polynomials so that degree of denominator is not less than that of numerator. Need to factor and reduce polynomials to simplest form. (Generalization of Euclid's algorithm.) Need to solve a system of linear equations. Multiple roots makes things more complicated. Method enables us to integrate all fractions of two polynomials.

- More ad hoc rules. These rules are not sufficient. Ex: f(x) = 1 / (x3 + x + 1).

Writing a computer program to perform symbolic integration appears to be a daunting task. In the early 1960s, only humans could find the indefinite integral of a function, except in the most trivial of cases. One approach is to mimic the method taught in introductory calculus classes - build a huge table of known integrals and try to pattern match. In the 1800s Liouville sought an algorithm for integrating elementary functions. In the 19th centruy, Hermite discovered an algorithm for integrating rational functions - used partial fractions as basic primitive. An elementary function is one that can be obtained from rational-valued functions by a finite sequence of nested logarithm, exponential, and algebraic numbers or functions. Since √-1 is elementary, all of the "usual" trig and inverse trig functions (sin, cos, arctan) fall into this category since they can be re-expressed using exponentials and logarithms of imaginary numbers. Not all elementary functions have elementary indefinite integrals, e.g., f(x) = exp(-x2), f(x) = sin(x2), f(x) = xx, f(x) = sqrt(1 + x3).

Finding a finite method for integrating an elementary function (if it exists) was the central problem in symbolic integration for many decades. Hardy (1916) stated that "there is reason to suppose that no such method can be given", perhaps foreshadowing Turing's subsequent results on undecidability. In 1970, Robert Risch solved the problem, providing a provably correct and finite method for integrating any elementary function whose indefinite integral is elementary. (Actually, his method is not universally applicable. To apply it, you need to solve a hard differential equation. Lots of effort has gone into solving this differential equation for a variety of elementary functions.) Refinements of this method are commonplace in modern symbolic algebra systems like Maple and Mathematica. Work also extended to handle some "special functions." Relies on deep ideas from algebra and number theory. These techniques have enabled mathematicians to find new integrals that were previous not known or tabulated, and also to correct a mistakes in well-known collections of integrals! For exceptionally curious readers, here is a symbol integration tutorial.

Polynomials.

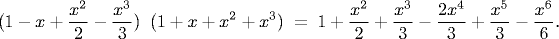

Polynomials are a very special type of elementary functions. Our goal is to be able to write programs that can manipulate polynomials and perform computations such as:

We also want to be able to evaluate the polynomial for a given value of x. For x = 0.5, both sides of this equation have the value 1.1328125. The operations of multiplying, adding, and evaluating polynomials are at the heart of a great many mathematical calculations. Many applications for simple operations (add, multiply), and surprising applications for more complicated operations (division, gcd), e.g., Sturm's algorithm for find the number of real roots of a polynomial in a given interval, solving systems of polynomial equations, Groebner bases. Widely used in systems and control theory since Laplace transform of common signals results in ratio of two polynomials.

Polynomial API. The first step is to define the API for the polynomial ADT. We begin with polynomials whose coefficients and exponents are integers. For a well-understood mathematical abstraction such as a polynomial, the specification is so clear as to be unspoken: We want instances of the ADT to behave precisely in the same manner as the well-understood mathematical abstraction. Immutable.

A sample client. Program Binomial.java reads in an integer N from the command line and prints out the expansion of (1+x)N.

Implementation. Program Polynomial.java represents a univariate polynomial of degree deg using an integer array coef[] where coef[i] records the coefficient of xi.

We provide one constructor which takes two arguments a and b and creates the monomial axb. The helper method degree() computes the actual degree of the polynomial (zero if a is zero).

public class Polynomial { private int[] coef; // coefficients private int deg; // degree of polynomial

To add two polynomials a and b, we loop through the two arrays and add their coefficients. The maximum degree of the resulting polynomial is a.deg + b.deg. We initialize c to be the polynomial of degree N with all zero coefficients. We are careful to maintain the invariant that c.deg is the actual degree of the polynomial (which might be different from a.deg + b.deg if the leading coefficients of the two summands cancel each other out).

public Polynomial(int a, int b) { coef = new int[b+1]; coef[b] = a; deg = degree(); }

To multiply two polynomials, we use the elementary algorithm based on the distributive law. We multiply one polynomial by each term in the other, line up the results so that powers of x match, then add the terms to get the final result.

public Polynomial plus(Polynomial b) { Polynomial a = this; Polynomial c = new Polynomial(0, Math.max(a.deg, b.deg)); for (int i = 0; i <= a.deg; i++) c.coef[i] += a.coef[i]; for (int i = 0; i <= b.deg; i++) c.coef[i] += b.coef[i]; c.deg = c.degree(); return c; }

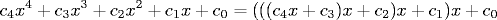

To evaluate a polynomial at a particular point, say x = 3, we can multiply each coefficient by the appropriate power of x, and sum them all up. The implementation of the evaluate uses a direct optimal algorithm known as Horner's method, which is based on parenthesizations

The following code fragment performs polynomial evaluation using Horner's method.

public int evaluate(int x) { int p = 0; for (int i = deg; i >= 0; i--) p = coef[i] + (x * p); return p; }

Rational arithmetic.

Program Rational.java is an abstract data type for nonnegative rational numbers. It implements the following interface. To reduce fractions, we use Euclid's greatest common divisor algorithm as a subroutine to find the least common multiple (lcm) of two integers.

Arbitrary precision arithmetic.

The java.math library provides two ADTs BigInteger and BigDecimal that provide support for arbitrary precision arithmetic.Maple.

Maple is a popular system for symbolic mathematical computation. It was developed by a research group at the University of Waterloo, and is available at many Universities. It can be used for calculus, linear algebra, abstract algebra, differential equations, plotting functions, and numerical calculations. It is also a general purpose programming language with conditionals, loops, arrays, and functions.The following sessions illustrates basic arithmetic and built-in functions. Note the the answers given are exact, and no floating point approximations are made unless we explicitly convert to floating point. All statements end with a semicolon (in which case the result is printed to the screen) or a colon (in which case the result is suppressed).

% maple |\^/| Maple V Release 5 (WMI Campus Wide License) ._|\| |/|_. Copyright (c) 1981-1997 by Waterloo Maple Inc. All rights \ MAPLE / reserved. Maple and Maple V are registered trademarks of <____ ____> Waterloo Maple Inc. | Type ? for help. > # arithmetic > 1 + 1; 2 > # arbitrary precision arithmetic > 2^(100); 1267650600228229401496703205376 > # rational arithmetic > # trigometric function > sin(Pi/6); 1/2 > # exponential function > exp(1); exp(1) # convert to floating point > exp(1.0); 2.718281828 > # 50 digits of precision > Digits := 50: > exp(1.0); 2.7182818284590452353602874713526624977572470937000 > Digits := 10: > # an error > 1 / 0; Error, division by zero > # complex numbers > (6 + 5*I)^4; -3479 + 1320 I # built-in functions > BesselK(1.0, -3.0); -.04015643113 - 12.41987883 I > # base 10 logarithm > log[10](100); ln(100) ------- ln(10) # simplifying > simplify(log[10](100)); 2 # end the session quit;

One of the most powerful features of Maple is its support of symbolic variables. Maple uses := for assignment statements since = is reserved for mathematical equality.

# assignment statements > m := 10: > a := 9.8: > f := m * a; f := 98.0 > i := 10: > i := i + 1; i := 11 > # polynomials > expand((x+1)^6); 6 5 4 3 2 x + 6 x + 15 x + 20 x + 15 x + 6 x + 1 > # differentiation > diff(sin(x*x), x); 2 2 cos(x ) x > # partial differentiation > diff((x^2 - y) / (y^3 - 1), x); x 2 ------ 3 y - 1 > # indefinite integration > int(x^3 * sin(x), x); 3 2 -x cos(x) + 3 x sin(x) - 6 sin(x) + 6 x cos(x) > # definite integration > int(exp(-x^2), x = 0..infinity); 1/2 1/2 Pi > # series summation > sum(i, i = 1..100); 5050 factor(sum(i, i = 1..N)); 1/2 N (N + 1)

Equation solving, arrays, conditionals, loops, functions, libraries, matrices,

> # solve equation > solve(x^4 - 5*x^2 + 6*x = 2); 1/2 1/2 -1 + 3 , -1 - 3 , 1, 1 > # solving system of equations > solve({x^2 * y^2 = 0, x - y = 1}); {y = -1, x = 0}, {y = -1, x = 0}, {y = 0, x = 1}, {y = 0, x = 1} > # find floating point solutions > fsolve(x^4 * sin(x) + x^3*exp(x) - 1); .7306279509 > # maximize > # user-defined functions > f := x -> x^2: > f(9); 81 > g := x -> sin(x) * exp(x): > f(g(Pi/6)); 2 1/4 exp(1/6 Pi) > diff(f(g(x)), x); 2 2 2 2 sin(x) exp(x) cos(x) + 2 sin(x) exp(x) > # absolute value function > f := proc(x) if x > 0 then x else -x fi; end: > f(-7); 7 > f(7); > # recursion > mygcd := proc(p, q) if q = 0 then p else mygcd(q, p mod q) fi; end: > mygcd(1440, 408); 24 > # loops and conditionals > # print primes of the form 2^i - 1 > for i from 1 to 600 do if isprime(2^i - 1) then print(i); fi; od; 2 3 5 7 13 17 19 31 61 89 107 127 521 > # arrays - Chebyshev polynomials (expand to keep it unfactored) > p[0] := 1; > p[1] := x; > for i from 2 to 10 do p[i] := expand(2*x*p[i-1] - p[i-2]) od; > p[10]; 10 8 6 4 2 512 x - 1280 x + 1120 x - 400 x + 50 x - 1 > subs(x = 1/6, p[10]); 12223 ------ 118098

garbage collection, variables are global so must be careful not to reuse - can reset with x := 'x'.

Integration in Maple. When you integrate a function in Maple, it tries a number of different integration methods.

- Polynomials.

- Table lookup.

- Heuristics: substitutions, integration by parts, partial fractions, special forms involving trig and polynomials

- Risch algorithm: Horowitz reduction, Lazard/Rioboo/Trager method

To witness Maple in action,

> infolevel[int] := 2; > int(1 / (x^3 + x + 1), x); int/indef1: first-stage indefinite integration int/ratpoly: rational function integration int/rischnorm: enter Risch-Norman integrator bytes used=1000360, alloc=786288, time=0.14 int/rischnorm: exit Risch-Norman integrator int/risch: enter Risch integration int/risch: the field extensions are [x] unknown: integrand is 1 ---------- 3 x + x + 1 int/ratpoly/horowitz: integrating 1 ---------- 3 x + x + 1 int/ratpoly/horowitz: Horowitz' method yields / | 1 | ---------- dx | 3 / x + x + 1 int/risch/ratpoly: Rothstein's method - factored resultant is 3 [[z - 3/31 z - 1/31, 1]] int/risch/ratpoly: result is ----- \ 2 ) _R ln(x - 62/9 _R + 31/9 _R + 4/9) / ----- _R = %1 3 %1 := RootOf(31 _Z - 3 _Z - 1) int/risch: exit Risch integration ----- \ 2 ) _R ln(x - 62/9 _R + 31/9 _R + 4/9) / ----- _R = %1 3 %1 := RootOf(31 _Z - 3 _Z - 1)

Using Maple.

Q + A

Q. When I get an error in Maple, I don't get back to the Maple prompt.

A. Try typing a semicolon followed by return.

Exercises

- Modify the toString() method of Rational so that it suppresses the denominator if it is 1, e.g., 5 instead of 5/1.

- Add isOdd() and isEven() methods to the polynomial ADT to indicate if the polynomial is odd (all nonzero coefficients have odd exponents) or even (all nonzero coefficients have even exponents).

- Add equals() and compareTo() methods to Rational.

- Modify the toString() method of Polynomial so that it suppresses the exponent in the x^1 term and the x^0 in the constant term. Some boundary cases to check: f(x) = 0, 1, and x.

- Add an equals() method to Polynomial.

- Stave off overflow in Rational using the following ideas: .... Check for overflow in Rational and throw an exception if the result will overflow.

- Add a method minus() to Rational and support for negative rational numbers.

- Write a program Taylor.java that creates a polynomial (of rational coefficients) containing the first 10 terms of the Taylor expansion of e^x, sin x and e^x sin x.

- Expand (1-x)(1-x^2)(1-x^3)(1-x^4)...(1-x^n). When n = 3, this is 1 -x - x^2 + x^4 + x^5 - x^6. In the limit, all of the coefficients are 0, +1, or -1.

Creative Exercises

- Chebyshev polynomials.

The Chebyshev polynomials are defined by solutions to

the equation

Although the solution appears to be trigonometric, its solution is a polynomial in x. The first few such polynomials are given below. In general T(n) = 2x * T(n-1) - T(n-2).Tn(x) = cos(n arccos x)

The Chebyshev polynomials have many special arithmetic properties which makes them useful mathematical objects in interpolation theory, approximation theory, numerical integration, ergodic theory, number theory, signal processing, and computer music. They also arise from the differential equation:T0(x) = 1 T1(x) = x T2(x) = 2x2 - 1 T3(x) = 4x3 - 3x

Write a program Chebyshev.java that takes a command-line parameter N and prints out the first N Chebyshev polynomials.(1 - x2) y'' - x y' + n2y = 0

- Hermite polynomials.

Write a program Hermite.java that

takes an integer input N and prints out the first N Hermite polynomials.

The first few Hermite polynomials are given below.

In general H(n) = 2x * H(n-1) - 2(n-1) * H(n-2).

H(0) = 1 H(1) = 2x H(2) = 4x2 - 2 H(3) = 8x3 - 12x

- Fibonacci polynomials.

Write a program Fibonacci.java

that takes an integer input N and prints out the first N Fibonacci polynomials.

The first few Fibonacci polynomials are given below.

In general F(n) = xF(n-1) + F(n-2).

How does the sequence relate to the Fibonacci sequence? Hint: evaluate the polynomials at x = 1.F(1) = 1 F(2) = x F(3) = x2 + 1 F(4) = x3 + 2x

- Composition. Add method for composing two polynomials, e.g., f.compose(g) shoudl return the polynomial f(g(x)).

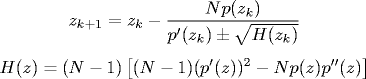

- Laguerre's method.

Write a program to find a real or complex root of a polynomial using

Laguerre's method. Given a polynomial p(z) of degree N and a complex

starting estimate z0, apply the following update rule until

convergence.

Choose the sign of the term in the denominator to minimize |zk+1 - zk|. Laguerre's method has superior global convergence properties to Newton's method, and guarantees to converge to a root if the polynomial has only real roots.

- Farey sequence.

The Farey sequence of

order N is the increasing sequence of all rational numbers (in lowest common form)

between 0 and 1 whose numerator and denominator are integers between 0 and N.

1: 0/1 1/1 2: 0/1 1/2 1/1 3: 0/1 1/3 1/2 2/3 1/1 4: 0/1 1/4 1/3 1/2 2/3 3/4 1/1 5: 0/1 1/5 1/4 1/3 2/5 1/2 3/5 2/3 3/4 4/5 1/1

Write a program Farey.java that takes a command line parameter N and prints the Farey sequence of order N. Use the rational number data data type created above.

To compute the Farey sequence, you can use the following amazing relationship: If m/n and m'/n' are two consecutive elements in the Farey sequence of order N, then the next element is m''/n'' which can be computed as follows (where the division is integer division):

m'' = ((n + N) / n') * m' - m n'' = ((n + N) / n') * n' - n

- Best rational approximation.

Newscasters often try to simplify unwieldy ratios like 0.4286328721345 to a

nearby rational number with small numerator and denominator like 4/7.

What is the best way to do this? The answer depends on the size of the

denominator that you're willing to tolerate, so our goal is to list

the best approximations and let the reporter choose the desired one.

Here are the best few rational approximations to the mathematical constant e:

0/1 1/1 2/1 3/1 5/2 8/3 11/4 19/7 49/18 68/25 87/32 106/39 193/71 685/252 878/323 1071/394

Although 27/10 is a decent approximation to e, it is excluded from the list since 19/7 provides a better approximation with an even smaller denominator. The Stern-Brocot tree method gives an elegant mathematical solution. Here's the algorithm for generating the best upper and lower rational approximations to a real number x:

- Set the left endpoint to 0/1 and the right endpoint to 1/0.

- Compute the mediant of left and right endpoints. The mediant of two rationals a/b and c/d is (a+c)/(c+d).

- If the mediant is equal to x (up to machine precision) stop. Otherwise, if the mediant is less than x, set the right endpoint to the mediant. Otherwise, set the left endpoint to the mediant.

As you iterate the above procedure, print out each new term if it provides a better approximation. Write a program RationalApprox.java to print out these best rational approximations.

- Continued fraction.

A continued fraction is an expression of the form

a0 + 1 / (a1 + 1 / ( a2 + 1 /a3) where

ai are integers.

- Given a continued fraction expansion a0, a1, ..., an, write a program to determine what rational number it corresponds to.

- Given a rational number, find its continued fraction expansion. For example 159/46 = 3 + 21/46 = 3 + 1 / (46/21) = 3 + 1 / (2 + 4/21) = 3 + 1 / (2 + 1 / (21/4)) = 3 + 1 / (2 + 1 / (5 + 1/4)).

- Arbitrary precision rational arithmetic. Write an ADT BigRational.java that imlplements arbitrary precision rational numbers. Hint: re-implement Rational.java, but use BigInteger instead of int to represent the numerator and denominator.

- Complex rational numbers. Implement a data type RationalComplex.java ComplexRational that supports complex numbers where the real and imaginary parts are rational numbers. Use it for deeply zoomed plots of the Mandelbrot set to avoid floating point precision. (Also check for cycles in the Mandelbrot sequence using the doubling trick.)

- Polynomial degree. Add a method degree() that computes the degree of a polynomial. Warning: this may not equal the size of the array if we subtract off two polynomials that original had degree 10, we may end up with a polynomial of degree 4.

- Rational polynomials. Create an ADT RationalPolynomial.java for rational polynomials using arbitrary precision rational coefficients. Include a method integrate(int a, int b) that that integrates the invoking polynomial from a to b and returns the resulting rational number.

- Polynomial division.

Write methods div() and rem() for division

of two polynomials with rational coefficients. Use the following grade

school method to compute the quotient and remainder of dividing

u(x) into v(x), assuming v(x) is not zero. The quotient q(x)

and remainder r(x) are polynomials which satisfy

u(x) = q(x) v(x) + r(x) and degree(r(x)) < degree(v(x)).

- If degree(u(x)) < degree(v(x)) return a quotient of zero.

- Multiply v(x) by axb such that u'(x) = u(x) - v(x)axb has degree less than degree(u(x)) and the highest degree terms cancel out

- Return a quotient of axb + u'(x) / v(x), where the division is computed recursively

- To compute the remainder, first compute the quotient q(x), then return the remainder r(x) = u(x) - q(x) v(x).

- Sturm's algorithm.

Sturm's algorithm

is an elegant method to determine the number

of real roots of a rational polynomial over a given interval.

Given a polynomial p(x), we define the Sturm chain as follows:

- f0(x) = p(x)

- f1(x) = p'(x)

- fn(x) = fn-1(x) % fn-2(x) where % is polynomial remainder

- Polynomial gcd. Implement Euclid's algorithm on rational polynomials to find the greatest common divisor of two polynomials. Use the division algorithm from the previous exercise. As with Euclid's algorithm for integers, we can use the following recurrence gcd((u(x), v(x)) = gcd(v(x), r(x)) where r(x) is u(x) % v(x) as defined in the previous exercise. The base case is gcd(u(x), 0) = u(x).

- Polynomial implementation. Suppose you need fast access to the individual coefficients, but the polynomial is sparse. Use a symbol table to store the coefficients. Probably BST so that you can print coefficients in order or to get the max degree.

- Aribtrary precision integer arithmetic. Develop your own library MyBigInteger that implements aribitrary precision integer arithmetic, just as in the Java library BigInteger.

- Fibonacci numbers. Write a program Fibonacci.java to compute Fibonacci numbers using big integers. Use Dijkstra's recurrence.

- Transfer function. A transfer function models how the output of a system changes according to the input. Transfer functions arise in all areas of engineering, and can model the speaker in cell phone, the lens of a camera, or a nuclear reactor. The transfer functions associated with seismometers (and many other mechanical and analog electronic systems) are ratios of two (frequency domain) polynomials with real coefficients p(s) / q(s). Such transfer functions arise frequently in systems and control theory since the Laplace transform of common signals results in ratio of two polynomials. Zeros are values where numerator is 0, poles are values where denominator is 0. Poles are zeros are of fundmanental importance in understanding the behavior of the underlying system. Poles govern stability. If a system is stable and the input is changed, the output will converge to a constant value; if it is unstable (e.g., nuclear reactor at Chernobyl) the output will grow or fall indefinitely. If system is stable, then the real parts of all poles are positive. Zeros affect design of feedback controller. Ex: (3z - 1) / (z - 1/3)(z^2 - 1), 30(z-6)/ z(z^2+4z+13), (s+1)(s^2 + s + 25)/ s^2(s+3)(s^2+s+36).

- Arbitrary precision square root. Write a program ArbitraryPrecisionSqrt.java that takes two integer command-line arguments x and n, and prints the square root of x, with n digits of precision. Use Newton's method and java.math.BigDecimal. Newton's method converges quadratically: each Newton step doubles the number of digits of accuracy.